From signals to strategy

An outcome-based framework for measurement and prioritization to turn signals into shared strategy.

This case study has been condensed, with all confidential information removed. If you would like to learn more about this design and process, please contact me.

Overview

The organization regularly tracked business metrics to evaluate overall success, including revenue, individuals trained, digital subscribers, and CSAT scores. While this data was valuable for understanding high-level customer trends in growth, adoption, and satisfaction, it wasn’t directly actionable.

As a result, senior managers felt they lacked the reliable diagnostic data needed to identify the underlying factors driving business metrics. This led to circular, opinion-driven debates, extended product release cycles (6-8 sprints on average), and misaligned product investments.

The organization needed a shared measurement system that was grounded in customer outcomes and precise enough to act on. To address this, I led the development of the CXO (Customer Experience Outcomes) Framework, which was built and adopted in 12 months.

Problem

1 — No shared definition of success

Each product team tracked its own metrics in its own way. Revenue, individuals trained, NPS, CSAT — all useful directionally, but none of them could answer the question that mattered: are customers accomplishing what they came here to do? When NPS dropped and open-text feedback said "hard to find what I need," it was difficult to pinpoint whether the issue was navigation, search, or content gaps. The metrics could show that something was off. They couldn't show what.

2 — No connection between metrics and product decisions

Even when teams had data, it didn't map to anything actionable. Revenue showed financial movement but not why people subscribed or left. Completion counts reflected activity without measuring value delivered. Satisfaction scores captured sentiment without structured feedback that could point to a specific product flow or feature. Conversations stalled in the gap between "we see a problem" and "here's what to fix."

3 — No way to compare or prioritize across products

With so many teams using different definitions and different instruments, there was no way to look across the portfolio and make informed tradeoffs. Leadership couldn't answer basic allocation questions: which products have the largest experience gaps? Where would investment have the most impact? Prioritization conversations were harder than they needed to be because there was no shared frame of reference.

Solution

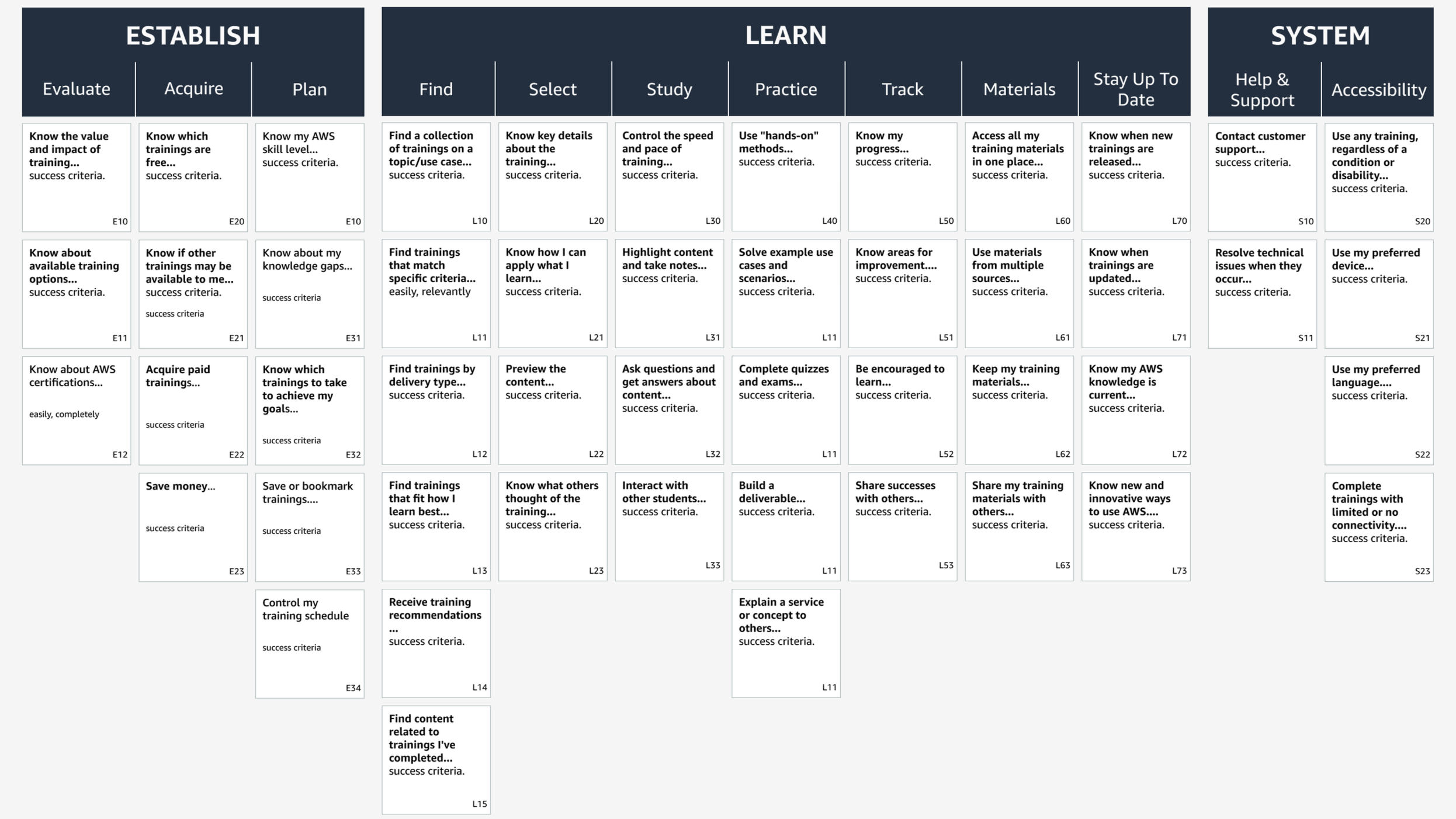

1 — 51 validated customer outcomes

I synthesized 86 existing internal research reports and conducted new interviews across four learner types. This surfaced 63 outcomes representing what customers were actually trying to accomplish. A validation study with 1,200 customers narrowed those to 51 CXOs, organized into four behavior categories. Each CXO included a job statement, success criteria, and behavioral and attitudinal metrics tied to specific product tasks.

2 — Diagnostic measurement replacing aggregate scores

The framework's core innovation was precision. Instead of a single satisfaction number, each CXO measured task completion rates, time on task, and product analytics alongside difficulty ratings, confidence scores, ease of use, and usefulness — all connected to specific customer tasks rather than general sentiment.
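As a rough illustration of what task-level measurement can look like in data terms, the sketch below pairs behavioral measures (completion, time on task) with attitudinal ratings (difficulty, confidence) for a single outcome. It is not the framework's actual schema: the class and field names, the 1-5 rating scales, and the sample outcome name are all assumptions made for this example.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class TaskObservation:
    """One participant attempting one task tied to an outcome (hypothetical fields)."""
    completed: bool
    seconds_on_task: float
    difficulty: int  # assumed scale: 1 (very easy) to 5 (very difficult)
    confidence: int  # assumed scale: 1 (not confident) to 5 (very confident)


@dataclass
class OutcomeSummary:
    """Behavioral and attitudinal measures reported side by side for one outcome."""
    outcome: str
    completion_rate: float
    mean_seconds_on_task: float
    mean_difficulty: float
    mean_confidence: float


def summarize(outcome: str, observations: list[TaskObservation]) -> OutcomeSummary:
    """Aggregate task-level observations into one summary per outcome."""
    if not observations:
        raise ValueError("need at least one observation to summarize an outcome")
    return OutcomeSummary(
        outcome=outcome,
        completion_rate=sum(o.completed for o in observations) / len(observations),
        mean_seconds_on_task=mean(o.seconds_on_task for o in observations),
        mean_difficulty=mean(o.difficulty for o in observations),
        mean_confidence=mean(o.confidence for o in observations),
    )


# Illustrative values only, not data from the study.
sample = [
    TaskObservation(completed=True, seconds_on_task=40.0, difficulty=2, confidence=4),
    TaskObservation(completed=False, seconds_on_task=80.0, difficulty=4, confidence=2),
]
summary = summarize("Find relevant training content", sample)
```

Keeping both kinds of measure in one record is the point of the sketch: a low completion rate together with a high difficulty rating points to a specific task, which a single aggregate satisfaction score cannot do.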

3 — Embedded into how the organization already worked

A framework only delivers value when it's woven into how teams already work. CXO metrics were integrated into product proposal templates, quarterly business reviews, roadmap planning, and executive reporting. Additionally, I built a searchable database of 1,000+ customer insights tagged by outcome, segment, and product, so teams could find evidence without submitting a research request.

Results

51 validated customer experience outcomes

86% organizational adoption (44 of 51 outcomes measured quarterly)

Product teams aligned to a shared measurement system

~$400K revenue traced to framework-informed product decisions

1,000+ searchable customer insights referenced in ~70% of yearly product proposals

Beyond the metrics, the way the organization made decisions changed. Prioritization conversations that once relied on intuition now started from shared data. Product managers could justify investment with evidence tied to specific customer outcomes rather than aggregate trends. And leadership could look across all products and make allocation decisions based on measured outcome gaps.

What this made possible

With a shared measurement system in place, the organization had something it had never had before: a diagnostic vocabulary. Teams could identify which customer outcomes were underperforming, trace those gaps to specific product flows, and measure whether their interventions actually worked.

The quarterly cadence meant measurement wasn't a one-time event but a continuous feedback loop, with each cycle refining both the products and the organization's confidence in its own decision-making. Because the framework was grounded in customer needs rather than product features, it remained relevant as the portfolio evolved.